Kenyan workers are still the underpaid labor behind A.I. training, moderation, and sex chatbots. The Data Labelers Association is fighting back.

Originally published in 404 Media.

Every day, Michael Geoffrey Asia spent eight consecutive hours at his laptop in Kenya staring at porn, annotating what was happening in every frame for an AI data labeling company. When he was done with his shift, he started his second job as the human labor behind AI sex bots, sexting with real lonely people he suspected were in the United States. His boss was an algorithm that told him to flit in and out of different personas.

“It required a lot of creativity and fast thinking. Because if I’m talking to a man, I’m supposed to act like a woman. If I’m talking to a woman, I need to act like a man. If I’m talking to a gay person, I need to act like a gay person,” he told me when I met him at a coworking space in Nairobi. After doing this for months, he, like other data labelers, developed insomnia and PTSD and had trouble having sex.

“It got to a point where my body couldn’t function. Where I saw someone naked, I don’t even feel it. And I have a wife, who expects a lot from you, a young family, she expects a lot from you intimately. But you can’t, like, do it,” Asia said. “It fractured a lot of things for me. My body is like, not functioning at all.”

Asia eventually hit a breaking point and stopped working for AI companies. He is now the secretary general of a Kenyan organization called the Data Labelers Association (DLA) and the author of “The Emotional Labor Behind AI Intimacy,” a testimony of his time working as the real human labor behind AI sex bots. As part of the DLA, Asia has been working to organize workers to fight for better pay, better mental health services, an end to draconian non-disclosure agreements, and better benefits for a workforce that often earns just a few dollars a day. Data labelers train, refine, and moderate the outputs of AI tools made by the largest companies in the world, yet they are wildly underpaid and haven’t benefitted from the runaway valuations of AI companies.

Last month, the DLA held one of its largest events at the Nairobi Arboretum to sign up new members and to help them tell their stories.

These workers are required to stare at horrific content for many hours straight with few mental health resources, are largely managed by opaque algorithms, and, crucially, are the workers powering the runaway valuations of some of the richest and most powerful companies in the world.

“You can’t understand where you’re positioned if you don’t understand your history,” Angela, one of the day’s speakers, told the workers who had assembled there (many of the speakers at the event did not give their full names). “When you think of colonialism, we were under British Imperial East Africa Company […] so literally, we are working under a company. We are just products, part of their operation. Stakeholders, we can say, but we are at the bottom of the bottom.”

“These multinationals are coming to rule and dominate here,” she added. “It’s a very unfortunate supply chain, and my call today as data labelers is to build up on this—as we are fighting for labor rights, we are also fighting for the environment […] we are fighting big companies. We are fighting the British imperialist companies of today. It’s Apple, it’s Meta, it’s Gemini. Those are the ones we’re still fighting. It’s a call for solidarity and expanding our thinking beyond what we are doing, beyond our labor.”

In my few days in Kenya earlier this year, where I was traveling to speak at a conference about AI and journalism, it was immediately clear that data labelers make up a significant portion of the country’s tech workforce. Nearly everyone I spoke to there had either been a data labeler (or a content moderator) themselves or knew someone who had. Leaving the airport in Nairobi, you immediately drive by Sameer Business Park, an office complex that houses Sama, a San Francisco-headquartered “data annotation and labeling company” that has contracted with Meta, OpenAI, and many other tech giants. Sama has been sued repeatedly for its low pay and the fact that many of its workers suffer PTSD from repetitively looking at graphic content. For years, a giant sign outside its office read: “Samasource THE SOUL OF AI.” My Uber driver asked why I was going to a random office building in Nairobi’s Central Business District. I told her I was going to interview a data labeler. “Oh, I do data labeling too,” she said.

Michael Geoffrey Asia. Image: Jason Koebler

Asia studied air cargo management in university. He graduated and expected to find a job planning out cargo and baggage routes, but couldn’t find one because he graduated into an industry ravaged by COVID. Around this time, his child was diagnosed with lymphatic cancer, and he took out a loan of about $17,000 USD to pay for his treatments. He needed work, and found data labeling.

“It wasn’t offering good pay, to be honest,” Asia told me. “It was around $240 US dollars per month. But I felt like I didn’t have an option, I had a financial crisis, a sick child.”

Asia took a job at Sama, where he worked on various Meta projects. “You’re given a video and then told to describe the video, or you’re given pictures of people and told to identify faces. You’re supposed to draw bounding boxes around the faces and label that.” Last week, Sweden’s Svenska Dagbladet reported that Kenyan data labelers for Sama have been viewing and annotating uncensored footage from Meta’s AI camera glasses, which has included highly sensitive and violent footage.

Asia, through a group of colleagues and friends who called themselves “the Brotherhood,” eventually found another data labeling job that let him work from home. “We were a group of six friends, and everyone had to bring three job opportunities on a weekly basis,” he said. “I came across another gig that ended up not being a good one, where I had to annotate pornography.”

At this job, Asia went frame-by-frame in porn videos to annotate what was happening and what type of porn category it could possibly be. “You’re supposed to put yourself in the minds of the 8 billion people on Earth, every second of that video. So I may have someone searching for this pornography in Cuba and think ‘these are the tags they can use,’ if you’re searching ‘doggy,’ you know, that kind of thing,” he said. “So I worked on pornography for eight hours a day, and I did that project for eight months.” His ‘boss’ at the time was essentially a no-reply email with a link sent each day that gave him his work.

At the same time, Asia picked up a second job that started immediately after his shift tagging porn ended, where he was “training” AI companion bots, though he had no way of knowing which company he was actually working for. He quickly surmised that he was simply taking on the persona of different AI sex bots and was sexting with real people in real time.

“I could feel the human aspect in the conversations. Most of the people on the other side were lonely people,” he said. “I would have several profiles and the profiles are switching constantly depending on the needs of the person who pops up on your dashboard. I’d be sitting here talking to an old woman who needs love, but if she goes offline, another conversation pops up and then I’m responding to a gay person.”

The two jobs, done back to back, caused him to have insomnia, PTSD, and trouble having sex. Some data labelers, he said, work 18 hours a day. When I met him, he said he had essentially gone three full days without sleep because his body still hadn’t readjusted from his messed-up schedule.

Asia said he eventually was able to get mental health counseling through his child’s cancer center, which started because he was the caregiver of a child with cancer but quickly turned into therapy for PTSD related to his job. “It was of immense help to me as a person, it was one of the best services I’ve ever gotten, because they stood with me, and I said ‘I need a solution to this.’”

“We need technology, but it shouldn’t come at a human cost. What is so hard with offering mental support to the people working on graphic content? If this job was done in the U.S., would they do what they are doing in Kenya? Would they still give the pay they’re giving here? Here we are paid $.01 per task—it doesn’t make sense. Why this discrimination? If they can pay people in the U.S., well that means they can pay people in Kenya,” Asia said.

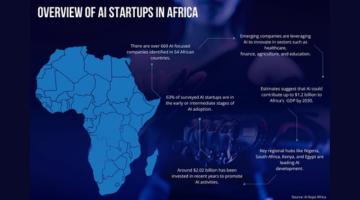

The message of many data labelers and of the lawyers who have been helping them is that artificial intelligence is not a magical tool built by people in San Francisco making millions of dollars a year and pushing their companies to insane valuations. Artificial intelligence is an extractive technology that relies on the brutal labor of underpaid workers around the world. For years, the work of African data labelers has been more or less “ghost work,” the unseen, hidden labor that lets American tech companies build their products.

“AI can never be AI without humans. It is not artificial intelligence. It’s African intelligence,” Asia said. “Most of these are dirty jobs and most of these jobs have been done here in Africa. And then once you’re done, once a tool is functional, all the communication stops. You get locked out. We are training our own death. We train ChatGPT and it’s killing us slowly.”

Draconian nondisclosure agreements and terms of service that workers can’t opt out of have created a culture of fear, and one of DLA’s goals is to make it easier for workers to speak out. At the time I met Asia in January, the DLA had 870 members, but its ranks have been growing quickly.

“I’m doing this from a point of experience, not assumption. I have been through this. I know what I’m talking about,” Asia said. “We have this monster called the NDA. The NDA is a slave tool used to enslave people to not speak about what they’re going through. I’m very much ready for any legal battle [associated with NDAs] because we’re not going to keep quiet. This is us suffering, and we can’t suffer in silence. This is not the colonial period. I have the right to speak against any violation [of my rights] and that’s what I’m doing.”

Mercy Mutemi, a workers’ rights lawyer who has sued several big tech companies including Meta for how they treat content moderators and data labelers, told me that when something happens in the United States, such as the launch of a new gadget, product, feature, or policy, there’s a corresponding reaction in Africa.

“When something happens in the U.S., there’s an African cost to that,” she said. “Kenya has been pushing for trade deals with the U.S., right? And the direction that conversation is taking is about immunity and protection for big tech. It’s like, ‘You want any business with us at all? Well, you’ve got to get Meta out of these cases.’”

Mutemi has been working on the Meta lawsuit, and on pushing back against NDAs so that workers can more freely talk about their experiences. Tech companies “get people in a mental jail where they feel like they can’t talk about this. But NDAs are nonsensical—our laws don’t recognize these types of NDAs,” she said. “There’s a way to go about this where it’s not exploitative.”

Back at the arboretum in Nairobi, the message to DLA’s members is largely that their work is important, that it’s human, and that they deserve better.

“Africa is at the bottom of the supply chain of AI. But right now, the fact that we are all here and most of you are data labelers—you are the people who supply the labor. When we think of the whole AI ecosystem, who’s an engineer, and maybe that’s the image of AI that the majority of the world has,” Angela said. “And that’s actually very intentional. To make [your labor] invisible, to make AI look like this shiny object that no one understands, it’s very automatic and beautiful and tech. That’s the intentionality of hiding the labor and the behind the scenes of AI.”

Jason is a cofounder of 404 Media. He was previously the editor-in-chief of Motherboard. He loves the Freedom of Information Act and surfing.